Rising AI Deepfake Threat Spurs Global Regulatory Crackdown and Industry Safeguards

May 6, 2026

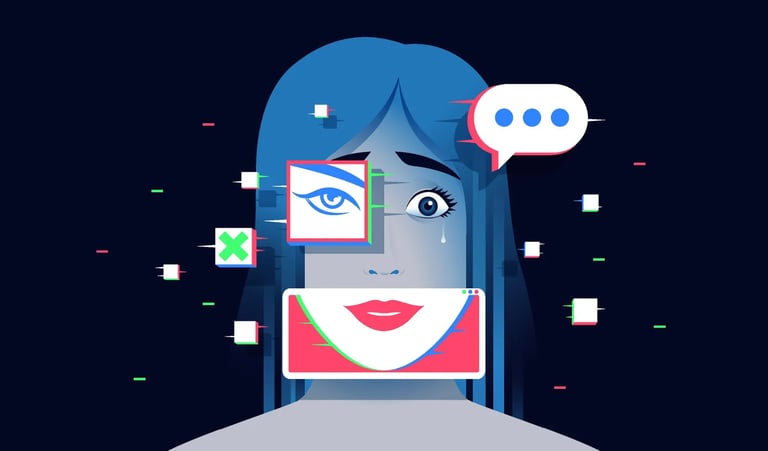

The backlash against generative AI centers less on the technology itself than on who owns a person’s image, voice, and likeness, as copying and deepfakes enable fake endorsements and scams made without permission.

Regulatory momentum is building with measures like the NO FAKES Act in the U.S. and Italy’s Law No. 132 criminalizing unlawful AI-generated content, alongside Tennessee’s ELVIS Act protecting performers’ voice rights.

Practical enforcement measures under discussion include authenticating and watermarking AI content, providing provenance proofs, and deploying robust detection to curb the spread of fakes before they harm victims.

Platforms face mounting liability and enforcement pressures to prevent deepfake distribution, potentially shifting responsibility beyond simple takedown duties.

A market for permission-based AI usage is emerging, calling for rights registries, licensing, voice authentication, takedown systems, and expanded insurance and contract frameworks in entertainment and advertising.

Celebrities and brands are already bearing financial and reputational costs from fake ads, manipulated interviews, and misused endorsements.

The core issue is that AI lowers the cost and expands the reach of impersonation, making governance of synthetic media more urgent and multifaceted.

Deepfakes are increasingly a commercial risk, enabling fake endorsements, fraudulent promotions, and misleading content that can damage brands, individuals, and business relationships.

Summary based on 1 source


Source

Forbes • May 6, 2026

The Next AI War Is Over Who Owns Your Identity