NanoClaw 2.0 Enhances AI Automation Safety with Human-In-The-Loop Oversight for High-Stakes Tasks

April 17, 2026

NanoClaw 2.0, released under NanoCo, partners with Vercel and OneCLI to introduce infrastructure-level approval dialogs for agent actions across messaging apps, with the aim of bringing safer AI automation to everyday workflows.

The project broadens human-in-the-loop oversight so that AI agents performing sensitive tasks on Slack, WhatsApp, and Microsoft Teams must obtain explicit human confirmation before proceeding.

A unified security layer immediately revokes permissions after an approved action completes, ensuring agents cannot bypass safeguards for high-stakes operations.
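The revoke-after-completion behavior described above can be illustrated with a minimal sketch. This is not NanoClaw's code: the `ApprovalGrants` class, its method names, and the in-memory set are all hypothetical, chosen only to show an approval that is valid for exactly one run of an action.

```python
from dataclasses import dataclass, field


@dataclass
class ApprovalGrants:
    """Hypothetical in-memory store of single-use approvals (illustrative only)."""
    _active: set = field(default_factory=set)

    def approve(self, action_id: str) -> None:
        # Called once a human has confirmed the action.
        self._active.add(action_id)

    def run(self, action_id: str, action):
        # Refuse anything that has no live approval.
        if action_id not in self._active:
            raise PermissionError(f"{action_id} has no active approval")
        try:
            return action()
        finally:
            # Revoke the grant whether the action succeeded or failed,
            # so the same approval cannot cover a second run.
            self._active.discard(action_id)
```

In this sketch a second call to `run` with the same `action_id` raises `PermissionError` until a human approves again.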

Key use cases include high-stakes DevOps tasks like phased cloud infrastructure changes and batch finance tasks such as payments, all requiring prior human approval.

Security by isolation is central: agents run in isolated containers with placeholder keys, while a Rust-based Gateway enforces policies and pauses sensitive actions for human authorization before real credentials are exposed.
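As a rough illustration of that flow, the sketch below models a gateway that accepts only placeholder keys from the agent, pauses sensitive actions for a human decision, and attaches the real credential only after approval. The actual Gateway is described as Rust-based; this Python version, along with the `Gateway`, `Request`, and `ask_human` names and the vault keyed by action name, is an assumption made purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set

PLACEHOLDER_KEY = "placeholder-key"  # the only key the agent ever holds (assumed value)


@dataclass
class Request:
    action: str   # e.g. "payments.send" (hypothetical action name)
    api_key: str  # placeholder supplied by the agent, or real key after the gateway


class Gateway:
    """Hypothetical policy gateway; names and fields are illustrative."""

    def __init__(self, vault: Dict[str, str], sensitive: Set[str],
                 ask_human: Callable[[Request], bool]):
        self._vault = vault          # real credentials, never visible to the agent
        self._sensitive = sensitive  # actions that need human approval
        self._ask_human = ask_human  # blocks until a human approves or denies

    def forward(self, req: Request) -> Request:
        if req.api_key != PLACEHOLDER_KEY:
            raise ValueError("agent requests must carry the placeholder key")
        # Sensitive actions pause here until a human decides.
        if req.action in self._sensitive and not self._ask_human(req):
            raise PermissionError(f"{req.action} was not approved")
        # The real credential is attached only at this point,
        # outside the agent's container.
        return Request(req.action, self._vault[req.action])
```

Because the swap happens inside the gateway, a compromised or manipulated agent in this model still only ever sees `PLACEHOLDER_KEY`.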

For enterprises, the framework offers a middle ground between automation and control, delivering high auditability, least-privilege operation, and human-in-the-loop governance that treats agents as supervised junior staff members.

NanoClaw operates on users’ devices via Docker to isolate agent sessions and enforce explicit permission boundaries at the infrastructure layer.

The 2.0 release emphasizes infrastructure-level enforcement over app-level permissions to prevent agent manipulation and ensure the approval workflow cannot be bypassed.

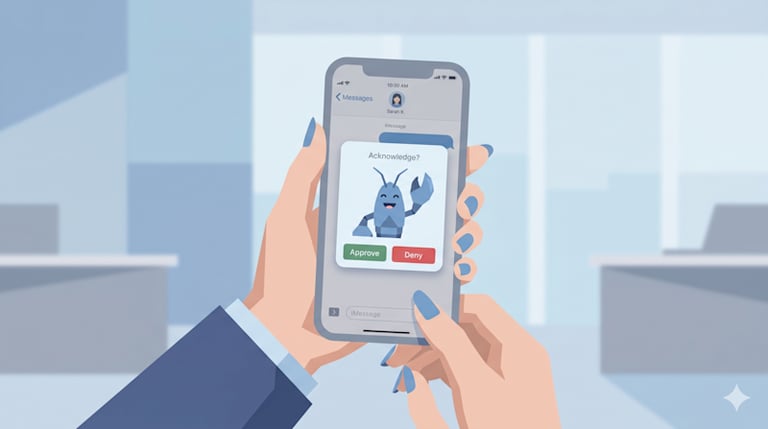

Vercel’s ChatSDK presents interactive native approval cards in users’ chat apps, prompting taps to approve actions like payments or resource deletions before execution.

OneCLI’s credential vault encrypts and safeguards credentials, injecting authentication into workflows only at approval time so credentials are never exposed to the agent.

Founder Gavriel Cohen argues this trust layer allows AI agents to handle high-stakes tasks more safely, mitigating risks from unrestrained access and AI hallucinations.

The collaboration aims to unlock productivity gains from AI automation by enabling agents to manage high-stakes information under strict oversight and access controls.

Summary based on 2 sources

Sources

SiliconANGLE • Apr 17, 2026

NanoClaw partners with Vercel to deliver one-click approvals for AI agents working on sensitive tasks