Nvidia Unveils Game-Changing BlueField-4 STX, Revolutionizing AI Inference with Speedy KV Cache Management

March 16, 2026

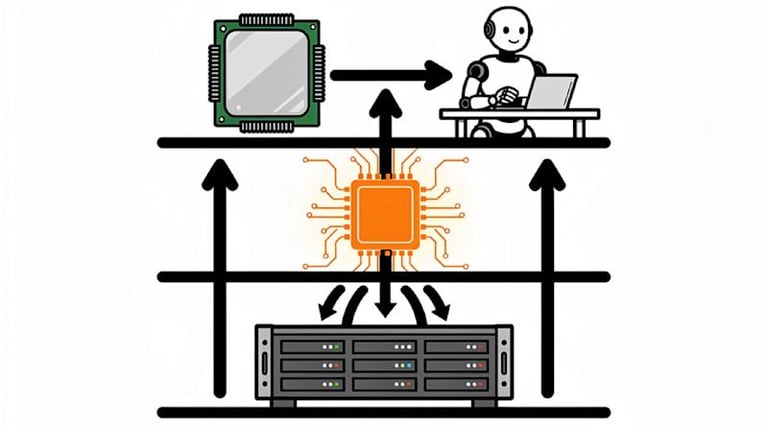

Nvidia introduced BlueField-4 STX at GTC 2026 as a modular reference architecture that inserts a dedicated context memory layer between GPUs and traditional storage to speed up AI inference by managing KV cache data.

A broad partner ecosystem, spanning storage incumbents and AI-native cloud providers, is expected to roll out STX-enabled platforms in the second half of 2026.

STX is a reference design rather than a commercial product. Nvidia distributes it to storage partners so they can build AI-native infrastructure, supplying both a hardware reference design and a software reference platform under DOCA, which includes a new DOCA Memo component.

IBM is a notable collaborator, integrating IBM Storage Scale System 6000 with Nvidia DGX deployments and highlighting accelerated data workflows, including a Nestlé case study showing production gains in data-refresh workloads.

Nvidia's performance claims for STX include up to five times the token throughput, four times the energy efficiency, and twice the data ingestion speed of CPU-based storage, though the exact baseline configurations were not disclosed.

Nvidia positions the storage layer as a first-class infrastructure decision for enterprise AI. It argues that traditional NAS and object storage cannot meet the latency that KV cache access requires during inference, and that STX provides a programmable, DOCA-based foundation for agentic workloads.

CMX represents the first rack-scale implementation, extending GPU memory with a high-performance context layer for KV cache storage and retrieval to avoid round-trips through general-purpose storage.

The STX architecture targets the KV cache bottleneck to enable faster retrieval of intermediate model computations, supporting multi-step reasoning, tool calls, and session continuity.
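The tiering idea described above can be illustrated with a toy sketch. It does not use Nvidia's API, DOCA, or the actual STX design: the class `TieredKVCache`, its method names, and the `recompute` callback are all hypothetical, and plain Python dictionaries stand in for GPU memory and the context memory layer. It shows only the general pattern of spilling evicted KV cache entries to a second tier so a returning session can reload them instead of recomputing them.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier cache (hypothetical, for illustration only).

    `gpu` stands in for scarce GPU memory; `context` stands in for a
    larger context memory layer that sits in front of general storage.
    """

    def __init__(self, gpu_capacity):
        self.gpu = OrderedDict()   # hot tier, kept in least-recently-used order
        self.context = {}          # spill tier for evicted entries
        self.gpu_capacity = gpu_capacity
        self.recomputes = 0        # how often a KV blob had to be rebuilt

    def put(self, session_id, kv_blob):
        # Insert into the hot tier and mark it as most recently used.
        self.gpu[session_id] = kv_blob
        self.gpu.move_to_end(session_id)
        # Spill the least recently used entries instead of discarding them.
        while len(self.gpu) > self.gpu_capacity:
            evicted_id, evicted_blob = self.gpu.popitem(last=False)
            self.context[evicted_id] = evicted_blob

    def get(self, session_id, recompute):
        # 1. Hot-tier hit: cheapest path.
        if session_id in self.gpu:
            self.gpu.move_to_end(session_id)
            return self.gpu[session_id], "gpu"
        # 2. Context-tier hit: promote back to the hot tier, no recompute.
        if session_id in self.context:
            blob = self.context.pop(session_id)
            self.put(session_id, blob)
            return blob, "context"
        # 3. Miss in both tiers: rebuild the entry (the expensive path).
        self.recomputes += 1
        blob = recompute(session_id)
        self.put(session_id, blob)
        return blob, "recomputed"
```

In this sketch, a session whose entry was evicted from the hot tier is served from the `context` tier on its next request, and the `recomputes` counter rises only when neither tier holds the entry. A real system would also have to handle transfer latency, capacity limits in the second tier, and concurrency, none of which is modeled here.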

STX-based systems are slated to be available from partner vendors in the second half of 2026, marking a shift toward AI-optimized storage in enterprise deployments.

Summary based on 1 source


Source

VentureBeat • Mar 16, 2026

Nvidia BlueField-4 STX adds a context memory layer to storage to close the agentic AI throughput gap